This is another short test in the vein of the earlier ones, asking whether there is a correlation between vocabulary and language level, and especially whether vocabulary is a reliable predictor. Here we take 60 random sentences from each level in the CLC and run them through Text Inspector, with no manual changes, to get an average % of types. Surprisingly, “Write and Improve” predicted that all 3 sets of random B1 concordances were C1. It also guessed that most B2 sentences were C2. That is a massive difference.

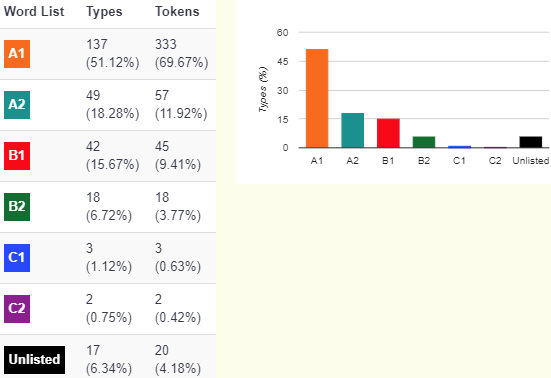

Sadly, my own hypotheses have also been destroyed! There is no improvement in vocabulary from B1 to B2 when looking at the proportions (if anything, there was a slight drop…). Even as a short test of about 1500 words per level, it should have shown something more significant. As I found in other tests, the most significant difference is sentence length. This may be masking more words at a higher level. I am not claiming that higher-level vocabulary doesn’t usually exist in the output of higher-level students. I am claiming that looking at one text of under 1500 words is not enough to distinguish between B1 and B2 by looking at automatically tagged vocabulary. The difference between a B1 and a C1 student, however, is quite clear:

Still, we can get some profile differences out of these pies, especially if we imagine what a 100-word paragraph would look like at each of these two levels. We can also answer questions like how many B1 EVP vocabulary items actually appear in one piece of B1-level writing. The answer is 10. That is, a tenth of their paragraph will consist of B1 words. If we combine this with a previous study in which I compared word frequency in native-speaker corpora, we can say that B1 vocabulary revolves around the 3000th most frequent word. In other words, a B1 writer will produce about 10 vocabulary items that are evidence of a vocabulary range of around 3000 words. In addition, that B1 learner will also use a few words in that 100-word paragraph that may come from around the 4000-word range.

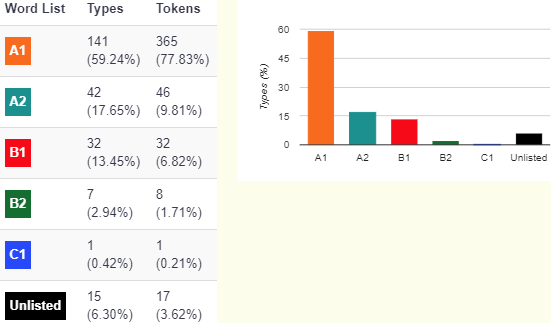

Now we get to places where intuition serves us poorly. The B1 learner drew 10% of their language from B1-level vocabulary. This does not carry over when we move to higher proficiency levels. The C1 learner produces only a couple of C-level vocabulary items in our imaginary 100-word paragraph. That’s roughly two words that show evidence of a vocabulary range of about 5000 to 8000 words. So we shouldn’t be surprised when we look at a piece of writing and there are only one or two highlighted items at the level of the student. My own writing, as a proficient user of the language, is often the same. What we also notice is that at C1 there is an increase in both B1 and B2 vocabulary items. Strangely enough, there is more demonstration of acquired B-level range at the C1 level than at the B levels themselves. In other words, when looking for proof of advanced proficiency, we also need to look for a greater range of vocabulary a few levels lower. This confirms the EGP vocabulary range points, which often take lower-level grammar points and lower-level vocabulary items and then give them a combined higher-level proficiency “mark.”
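The 100-word-paragraph reasoning above can be sketched in a few lines of Python. This is only an illustration: it uses the averaged Text Inspector percentages listed in the breakdown section of this post rather than the pie-chart figures, so the B1 count comes out slightly under the 10 quoted above. The names `PROFILES` and `expected_items` are made up for this sketch.

```python
# Averaged percentages of types per CEFR band, taken from the breakdown
# section of this post. "Unlisted" items are left out, so rows sum to < 100.
# The B2 figure for the B1 learner is 9.14 / 3, rounded to 3.05.
PROFILES = {
    "B1": {"A1": 57.35, "A2": 20.55, "B1": 8.88, "B2": 3.05, "C1": 0.0, "C2": 0.2},
    "C1": {"A1": 50.37, "A2": 19.94, "B1": 14.31, "B2": 6.08, "C1": 1.52, "C2": 0.89},
}

def expected_items(profile, paragraph_words=100):
    """Scale band percentages to expected item counts in a paragraph."""
    return {band: pct * paragraph_words / 100 for band, pct in profile.items()}

for level, profile in PROFILES.items():
    counts = expected_items(profile)
    b_items = counts["B1"] + counts["B2"]
    c_items = counts["C1"] + counts["C2"]
    print(f"{level} learner: ~{b_items:.1f} B-level and ~{c_items:.1f} C-level items per 100 words")
```

On these figures the C1 learner comes out at a little over two C-level items and about twenty B-level items per 100 words, against about twelve B-level items for the B1 learner, which is the pattern described above.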

Vocabulary breakdown

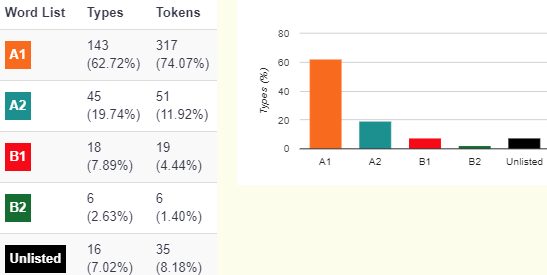

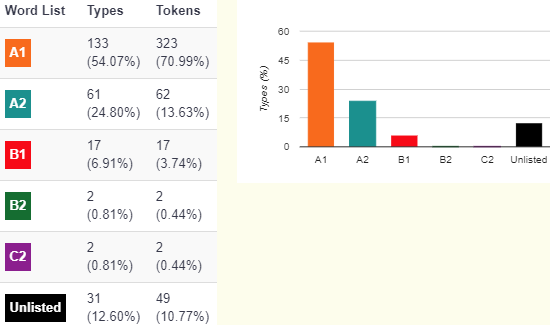

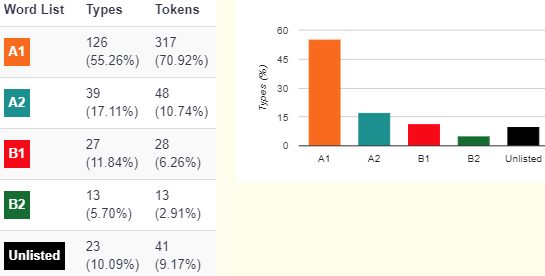

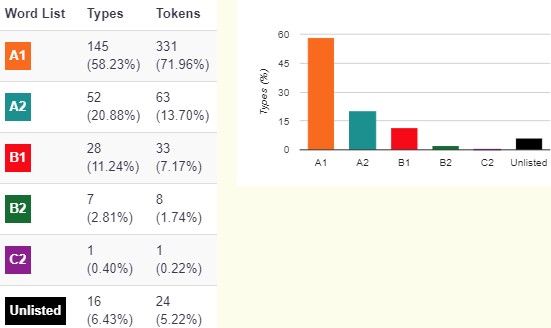

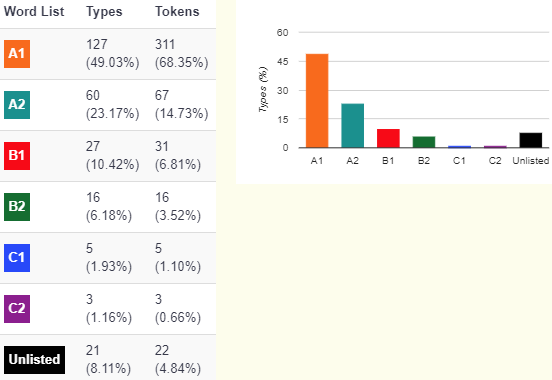

Averaged breakdown for a B1 student: A1 57.35%, A2 20.55%, B1 8.88%, B2 3.05%

These percentages change once we eliminate the “unlisted” items.

A1 = (62.72 + 54.07 + 55.26) / 3 = 172.05 / 3 = 57.35

A2 = (19.74 + 24.80 + 17.11) / 3 = 61.65 / 3 = 20.55

B1 = (7.89 + 6.91 + 11.84) / 3 = 26.64 / 3 = 8.88

B2 = (2.63 + 0.81 + 5.70) / 3 = 9.14 / 3 ≈ 3.05

C1 = 0 (surprisingly)

C2 = 0.2%

Unlisted items are not considered here, since many of them were codes (FCE, CAE, etc.).
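The averaging above is easy to reproduce. Here is a minimal Python sketch, assuming the three per-sample percentages are typed in by hand as in the sums above; `B1_SAMPLES` and `average_profile` are hypothetical names used only for illustration. It rounds to two decimals, which can differ in the last digit from a truncated figure.

```python
from statistics import mean

# Per-sample percentages of types for the three B1 samples,
# copied from the sums above ("unlisted" items excluded).
B1_SAMPLES = {
    "A1": [62.72, 54.07, 55.26],
    "A2": [19.74, 24.80, 17.11],
    "B1": [7.89, 6.91, 11.84],
    "B2": [2.63, 0.81, 5.70],
}

def average_profile(samples):
    """Return the mean percentage per CEFR band, rounded to two decimals."""
    return {band: round(mean(values), 2) for band, values in samples.items()}

B1_AVERAGES = average_profile(B1_SAMPLES)

for band, pct in B1_AVERAGES.items():
    print(f"{band}: {pct:.2f}%")
```

The same function could be reused for the B2 and C1 samples if their per-sample figures were entered in the same shape.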

B1 level students (random concordances 20-40)

(Write and Improve, WI = C1)

(40-60)

(WI= C1)

(60-80)

(WI= C1)

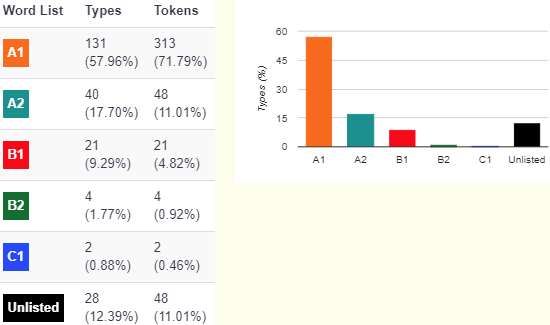

B2 students

Averaged: A1 58.48%, A2 18.74%, B1 11.3%, B2 2.5%, C under 1%

Note: I had to cut back the sentences to keep under 500 words. The word count should be similar, though.

(WI=C2)

(WI= C2)

(WI = C1) a drop!

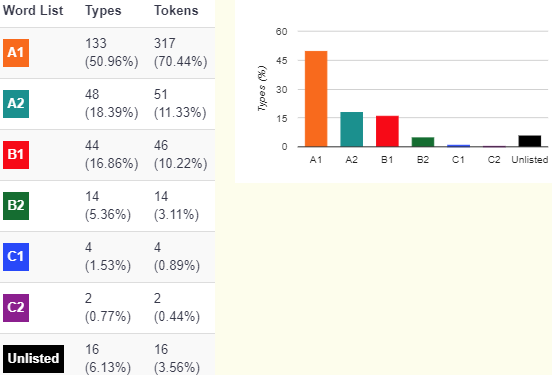

C1 students: A1 50.37%, A2 19.94%, B1 14.31%, B2 6.08%, C1 1.52%, C2 0.89%

(WI= C2)

(WI= C2)

(WI= C2-)
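To put a number on how far Write and Improve overshot, the nine predictions listed in this post can be tallied as below. This is a rough sketch, not a formal evaluation: it assumes the “C2-” result counts as plain C2, and the names `WI_PREDICTIONS`, `overshoot` and `mean_overshoot` are invented for the example.

```python
CEFR = ["A1", "A2", "B1", "B2", "C1", "C2"]

# Write and Improve (WI) predictions for each set of random concordances,
# keyed by the actual CLC level of the sentences.
# Assumption: the "C2-" result is counted as C2.
WI_PREDICTIONS = {
    "B1": ["C1", "C1", "C1"],
    "B2": ["C2", "C2", "C1"],
    "C1": ["C2", "C2", "C2"],
}

def overshoot(actual, predicted):
    """Number of CEFR bands by which the prediction exceeds the actual level."""
    return CEFR.index(predicted) - CEFR.index(actual)

def mean_overshoot(predictions):
    """Average overshoot across every prediction in the table."""
    gaps = [overshoot(actual, p) for actual, preds in predictions.items() for p in preds]
    return sum(gaps) / len(gaps)

for actual, preds in WI_PREDICTIONS.items():
    print(actual, [overshoot(actual, p) for p in preds])
print(f"mean overshoot: {mean_overshoot(WI_PREDICTIONS):.2f} bands")
```

Keep in mind that C1 texts can overshoot by at most one band, since C2 is the top of the scale, so the C1 row understates how generous the tool might be.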